Trying to Detect Fraud and Scams Won't Save You Anymore

A new wave of fraud doesn't start with a generic lure. It starts with your face, your numbers, and your most recent post.

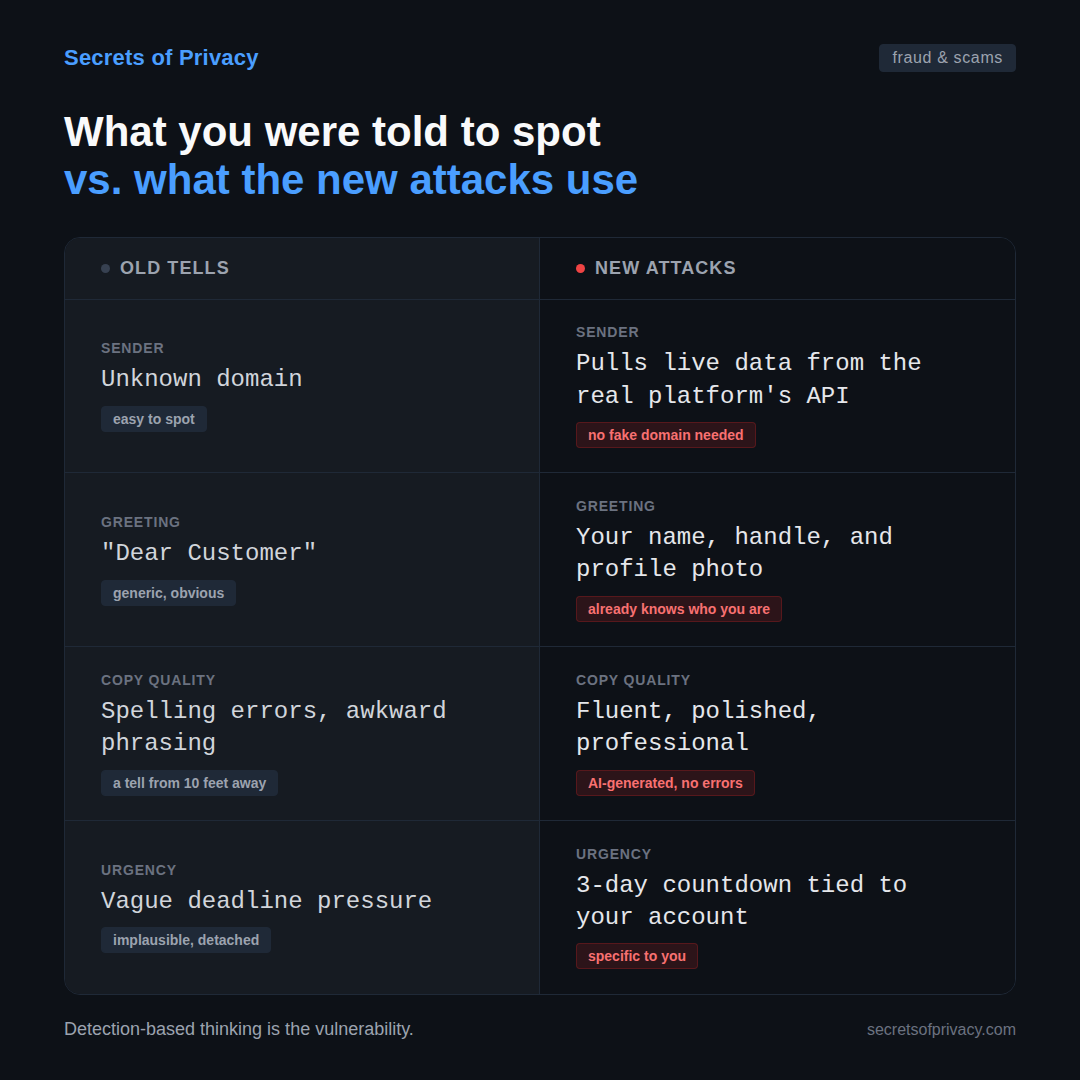

To avoid email scams, you were told to watch for generic greetings, suspicious senders, and bad grammar. The newest scams skip all of that.

Instead, they open with your actual profile photo, your real follower count, and a thumbnail of your most recent post. Everything in the email looks legitimate because the attacker pulled your public data before sending you anything.

This comes from a phishing campaign documented this week by Malwarebytes that pulls real YouTube channel data to build a personalized copyright scare page. The moment you land on it, the page already knows your avatar, your subscriber count, and your most recent video. If you enter your credentials on the fake Google sign-in it serves up, you lose your entire Google account.
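One check still holds no matter how much the page knows about you: the address of the sign-in form itself. Here is a minimal sketch of that check in Python. The function name and the one-entry allowlist are assumptions made for illustration, not part of the Malwarebytes report.

```python
from urllib.parse import urlsplit

# Assumption for this sketch: the only host we accept as a real Google sign-in page.
TRUSTED_SIGN_IN_HOSTS = {"accounts.google.com"}


def is_trusted_sign_in(url: str) -> bool:
    """Return True only for an https URL whose host exactly matches a trusted host."""
    parts = urlsplit(url)
    # .hostname drops any "user@" prefix and port, so look-alike tricks
    # such as "accounts.google.com@evil.example" resolve to the real host.
    host = (parts.hostname or "").lower()
    return parts.scheme == "https" and host in TRUSTED_SIGN_IN_HOSTS


if __name__ == "__main__":
    for candidate in (
        "https://accounts.google.com/signin",
        "https://accounts.google.com.example.net/signin",
        "https://accounts.google.com@evil.example/login",
    ):
        print(candidate, "->", is_trusted_sign_in(candidate))
```

The comparison is an exact match on the host rather than a "contains google.com" test, because a look-alike address can contain the real name anywhere in the string.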

And while this particular scam focuses on YouTube, it can equally apply to any number of other platforms, from Facebook to Instagram to Substack. Creators are especially at risk.

This scheme runs like a global hacker franchise. Multiple attackers share the same phishing kit, each running their own campaigns with their own affiliate ID embedded in the links. One operator can swap out the destination domain at any time to evade takedowns. That means the infrastructure stays live even when individual phishing pages get flagged.

There’s also a detail that says a lot about how professionally these operations are being run. The scam automatically screens out any channel with more than three million subscribers, showing those creators a clean bill of health instead of a scare page. The reason is simple if you think about it:

Large creators are more likely to have security staff, YouTube contacts, or the visibility to get the operation shut down quickly. Smaller creators, who have less protection and just as much to lose, are the higher-value targets.
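In code terms, that screening step is a single threshold check. The sketch below is a hypothetical reconstruction for illustration only: the three-million cutoff comes from the reporting described above, while the function name and return labels are invented here and are not taken from the kit.

```python
# Cutoff as described in the reporting; everything else in this sketch is assumed.
SUBSCRIBER_CUTOFF = 3_000_000


def page_shown(subscriber_count: int) -> str:
    """Return which page a channel of this size would see under the described gate."""
    if subscriber_count > SUBSCRIBER_CUTOFF:
        # Channels above the cutoff are steered away from the scam entirely.
        return "clean bill of health"
    return "scare page"


if __name__ == "__main__":
    for count in (12_000, 3_000_000, 3_000_001):
        print(count, "->", page_shown(count))
```

The point is how cheap the filter is: one comparison is enough to keep the operation away from the creators most able to get it shut down.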

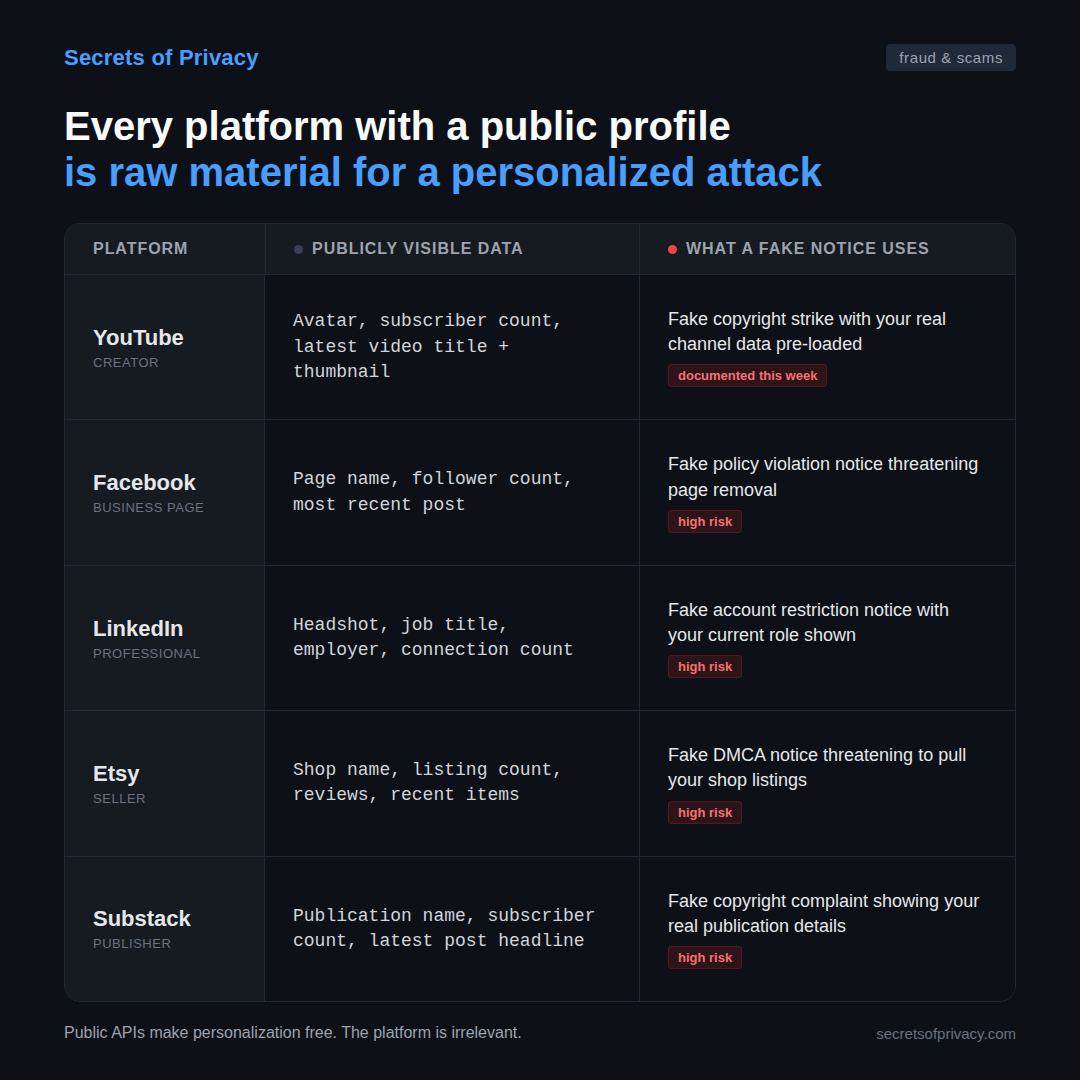

This scam works because YouTube has a public API, but public APIs are not unique to YouTube. Any platform that displays your profile photo, follower count, and recent activity to the world gives an attacker the raw material to build a personalized scare page.

Facebook business pages, LinkedIn profiles, Etsy storefronts, and Substack publications all expose enough public data to run the same scheme. A fake “your Facebook page has been flagged for removal” notice showing your page name, your follower count, and your most recent post would be just as convincing to a small business owner as the YouTube copyright notice is to a creator.

The YouTube scam is not an isolated case. It sits inside a larger pattern.

Voice cloning has crossed what researchers at the University at Buffalo describe as the “indistinguishable threshold.” Some major retailers are already reporting over 1,000 AI-generated scam calls per day.

The family emergency call, the one where you hear your daughter’s voice saying she’s been in an accident, is no longer a theoretical risk. It’s running at scale, and it runs on a few seconds of audio pulled from a public video or voicemail. Experian’s 2026 fraud forecast describes intelligent bots carrying out automated family-member-in-need scams with a sophistication that wasn’t possible even two years ago.

Both of those attacks share the same logic as the YouTube scam. They start with real data about you, pulled from public sources, and use it to make the approach feel legitimate before you’ve had a chance to think.

There’s a separate but related story worth knowing about, too. Omnistealer, a recently disclosed credential-stealing malware, hides its attack code inside blockchain transactions on networks like TRON and Binance Smart Chain. Because blockchains are append-only, those malicious snippets are effectively permanent once they’re written into a block. You can take down a malicious GitHub repo or revoke a domain, but you can’t roll back TRON to remove a few hundred bytes of malware staging code.
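To see why append-only storage is so hard to clean up, consider a toy hash chain. This is a simplified sketch, not the actual block format of TRON or Binance Smart Chain, and every name in it is made up for illustration. Each block's hash covers the previous block's hash, so changing or deleting one payload breaks the chain at that point.

```python
import hashlib

GENESIS = "0" * 64  # placeholder "previous hash" for the first block


def block_hash(prev_hash: str, payload: bytes) -> str:
    """Hash a block's payload together with the hash of the block before it."""
    return hashlib.sha256(prev_hash.encode() + payload).hexdigest()


def build_chain(payloads):
    """Link payloads into a list of blocks, each pointing at its predecessor."""
    chain, prev = [], GENESIS
    for payload in payloads:
        digest = block_hash(prev, payload)
        chain.append({"prev": prev, "payload": payload, "hash": digest})
        prev = digest
    return chain


def first_broken_link(chain):
    """Return the index of the first block that fails verification, or None."""
    prev = GENESIS
    for index, block in enumerate(chain):
        recomputed = block_hash(block["prev"], block["payload"])
        if block["prev"] != prev or recomputed != block["hash"]:
            return index
        prev = block["hash"]
    return None


if __name__ == "__main__":
    chain = build_chain([b"payment", b"staging bytes", b"payment"])
    print(first_broken_link(chain))   # chain verifies
    chain[1]["payload"] = b""         # try to scrub the middle block
    print(first_broken_link(chain))   # verification now fails at that block
```

On a real network, every participant holds a copy and rejects a chain that fails this kind of check, which is why a takedown request has nothing to act on.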

The campaign has been linked by on-chain forensics to (no surprise!) North Korean state-sponsored actors. It spread through fake developer job offers on LinkedIn and GitHub, where technically skilled targets were handed what looked like a routine freelance project.

While the personalization angle is less pronounced here, what Omnistealer illustrates is the other half of the same shift:

Sophisticated fraud operations are not only getting better at targeting people, they’re getting better at making their infrastructure difficult, if not impossible, to shut down. The attacks are more convincing and more resilient at the same time.

That combination is what makes this moment different from previous waves of online fraud.

For most of the history of online fraud, the attacker’s main constraint was personalization. A phishing email that addressed you by name was already considered sophisticated. And a scam call that knew where you worked was alarming.

Building a scam that looked genuinely specific to you (your face on the page, your voice in the audio, or your channel data in the copyright notice) required real effort and real resources. That constraint kept scam volume down. It limited who could run these operations and how many people they could reach.

That constraint is gone, and it’s not coming back.

The YouTube copyright kit fetches your real data automatically from a public API. Voice cloning tools require a few seconds of audio, freely available for most people who have ever posted a video or left a voicemail. The personalization layer is now a commodity. Anyone with modest resources can build an attack that feels like it was made specifically for you, because technically, it was.

What legislators and most security advice haven’t caught up to yet is that the old detection signals no longer work. You were told to look for misspellings, generic greetings, implausible urgency. An email that called you “Dear Customer” was a tell. None of that applies anymore. The new signals are different, and defending against them requires a different posture.
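The gap is easy to demonstrate with a toy version of the old advice. The sketch below is illustrative only: the word lists, the function name, and both sample messages are invented here, and it covers just two of the classic tells (generic greetings and misspellings).

```python
import re

# Hypothetical examples of the classic tells; not an exhaustive or official list.
GENERIC_GREETINGS = ("dear customer", "dear user", "dear sir or madam")
COMMON_MISSPELLINGS = ("acount", "verifcation", "recieve")


def legacy_red_flags(message: str) -> list[str]:
    """Return the old-style warning signs found in a message."""
    text = message.lower()
    flags = []
    if any(greeting in text for greeting in GENERIC_GREETINGS):
        flags.append("generic greeting")
    if any(re.search(rf"\b{word}\b", text) for word in COMMON_MISSPELLINGS):
        flags.append("misspelling")
    return flags


if __name__ == "__main__":
    old_style = "Dear Customer, your acount has been suspended."
    personalized = (
        "Hi Dana, your latest upload 'Studio Tour' on your channel "
        "(48,210 subscribers) has received a copyright claim."
    )
    print(legacy_red_flags(old_style))
    print(legacy_red_flags(personalized))
```

The old-style message trips both checks, while the personalized one trips none, even though nothing in it proves who sent it.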

How to Defend Yourself

The defense here isn’t about being more skeptical of suspicious-looking messages, because the messages won’t look suspicious. The defense is structural, which means developing habits and configurations that hold up even when the attacker has already done their homework on you. Here’s how to do that.