Your Workday Is Now AI Training Data

Workplace activity and employee data have become a tradeable asset.

Last week Reuters reported that Meta is rolling out new software on its US employees’ work computers to capture mouse movements, clicks, and keystrokes. The stated purpose has nothing to do with productivity monitoring or security.

Meta wants training data for the AI agents it’s racing to ship.

A company spokesperson confirmed as much directly, saying that the models need real examples of how people actually use computers, and that the best way to get those examples is to watch employees at work.

This is the third announcement like this in four months. Workplace activity is becoming AI training data, and most workers are still thinking about workplace surveillance the way they thought about it five years ago. That model is out of date, and here’s why.

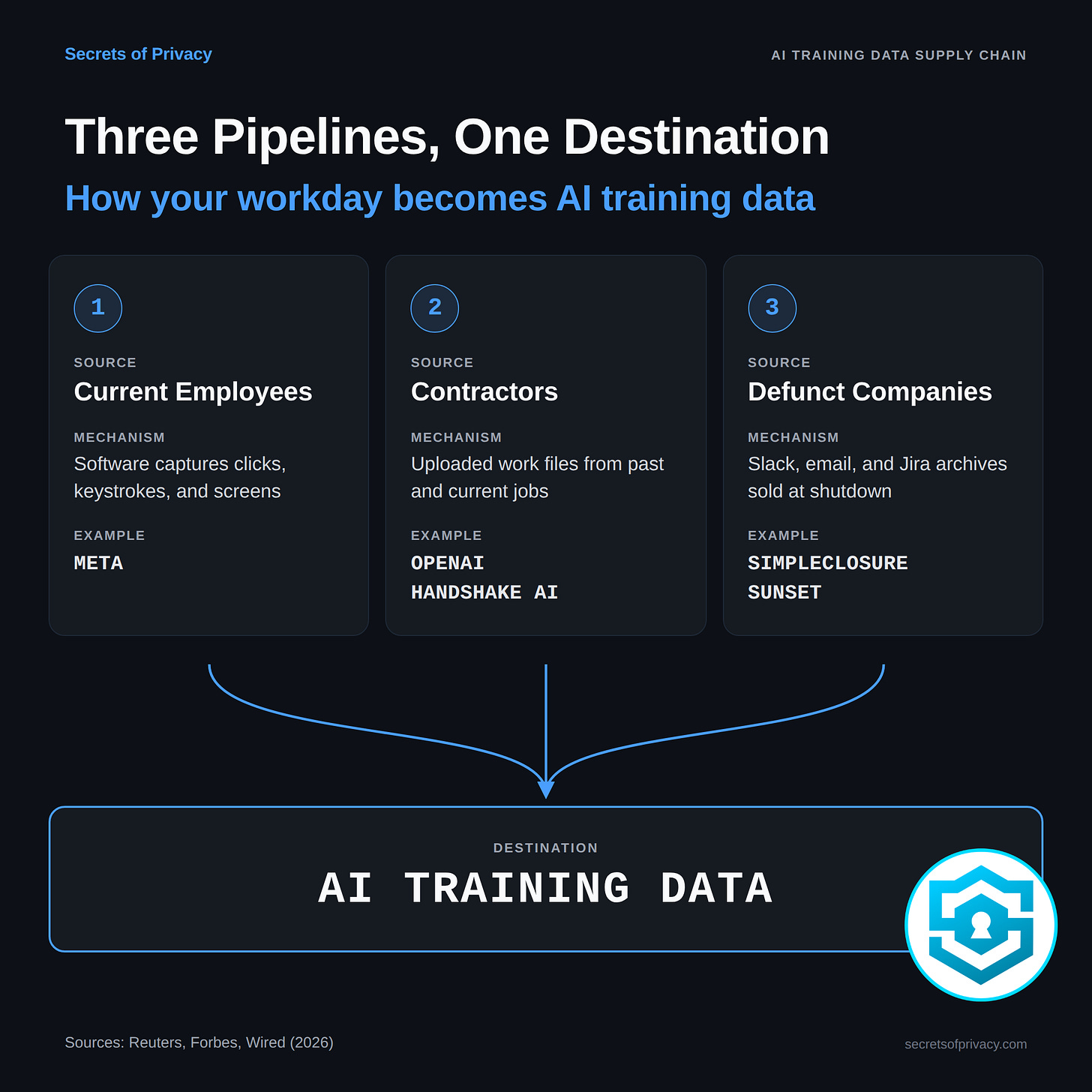

Three Pipelines, One Supply Chain

Three distinct streams are feeding into the same data supply chain.

The first is active collection from current employees. Meta is the highest-profile example. Any employer large enough to train its own AI models has an obvious incentive to start here, because in-house employee data is free and already flowing through company systems.

The second pipeline runs through contractors. In January, Wired reported that OpenAI’s data vendor Handshake AI started asking its freelance contractors to upload real work products from their past and current jobs: actual contracts, financial models, decks, and code repositories produced for other employers. The point of collecting these documents is to feed them into OpenAI’s training data, where they become part of the model weights. Handshake provides a tool called “Superstar Scrubbing” to help contractors remove confidential information before uploading. The original employers and clients are not in the loop.

The third is the dead-company market. On April 16, a startup called SimpleClosure launched a platform called Asset Hub that helps shutting-down companies monetize what its CEO, Dori Yona, calls “operational exhaust.”

The full archive of a defunct company (years of Slack messages, Jira tickets, email chains, Google Drive folders) gets bundled and sold to AI labs as training material. Per Forbes, SimpleClosure has processed nearly 100 of these deals in the past year, with payouts ranging from $10,000 to $100,000 per company. A competitor called Sunset is doing the same thing.

The first company to go through this at scale was cielo24, a transcription business that shut down after thirteen years. Its former CEO, Shanna Johnson, sold every Slack message, internal email, and Jira ticket for what she described as hundreds of thousands of dollars.

It Isn’t Just Meta, and It Isn’t Just Engineers

A reasonable response at this point is that these are three tech-industry stories about tech-industry companies, which doesn’t necessarily say much about the average worker. I don’t think that holds up, and a viral video from 2024 is a decent way to show why.

The post hit four million views because people found it viscerally strange.

But the software running in that coffee shop wasn’t custom-built. It was sold to the cafe by a vendor, which means the same vendor is selling it to other cafes, other retail operations, other small businesses.

Each of those installations generates data about how humans interact with physical workspaces, which happens to be exactly the kind of embodied-workflow training data AI labs are short on. And the worker making the coffee has even less leverage than the Meta engineer to negotiate what happens to any of it.

The Breach Problem Nobody Is Pricing In

Every company involved in this supply chain says it strips personally identifiable information before the data changes hands. SimpleClosure says it, OpenAI says it, Meta says it. The claim is that your name, email, and phone number come out before the archive goes to the buyer.

That’s the wrong thing to focus on.

A Slack archive with names removed still contains your writing style, your project details, your references to specific coworkers and clients, your internal jokes, and the content of what you actually said. ‘Anonymized’ doesn’t mean what it did 50 years ago. For nearly 20 years, in the right (or wrong) hands, it has meant something closer to ‘lightly disguised.’ More to the point, the content itself is the sensitive material.

A complaint about a manager.

A disclosure of a medical condition.

A complaint about a client.

A half-formed opinion about a competitor.

These don’t need your name attached to be damaging, and PII scrubbing doesn’t touch them.

There’s also a concentration problem.

An AI lab holding archives from a hundred defunct companies is a bigger breach target than any one of those companies ever was. If that lab gets breached, the fallout hits every former employee of every source company at once, years after they’ve moved on. The risk isn’t contained by the original employer closing its doors. It’s been handed off to a third party who now has every reason to keep the data and every reason to keep buying more.

Workplace Monitoring Used to Have an End Point

What feels different about this moment is that the purpose of workplace monitoring has shifted.

Traditional workplace monitoring existed to inform management decisions.

Was Kelly productive enough? Should she be promoted? Should the team be restructured?

The data was analyzed inside the company, for the company’s own use. It was invasive, but it was somewhat bounded.

What’s happening now operates on a different level.

Workplace activity is being harvested as a commodity that sells on an open market. The data doesn’t stay within the company that collected it. It feeds models that get deployed into thousands of other workplaces. The worker who clicks through a dropdown menu on a Meta laptop today is effectively helping train the AI that will, in two or three years, replace some other worker at some other company. And the worker generating that data hasn’t been offered anything in exchange.

There’s no opt-out in most of this. Most employment agreements don’t address it at all (yet). The IP assignment language employees signed when they took their jobs was written on the assumption that the employer might use work product internally. Nobody was anticipating a secondary market in employee work product.

Your Defensive Playbook

The rest of this piece focuses on what you can actually do, because there are meaningful moves available.

Most employers haven’t worked out their own policies on this yet, which means there’s a window in which individual pushback can shape outcomes. And even solo workers without any collective bargaining power can meaningfully limit their exposure, if they know what to look for.